Weekly Insights: AI Agent Infrastructure, Dev Experience, and a Security Wake-Up Call

🔥 Top Pick

alibaba/OpenSandbox: The Foundation for Trustworthy AI Agents

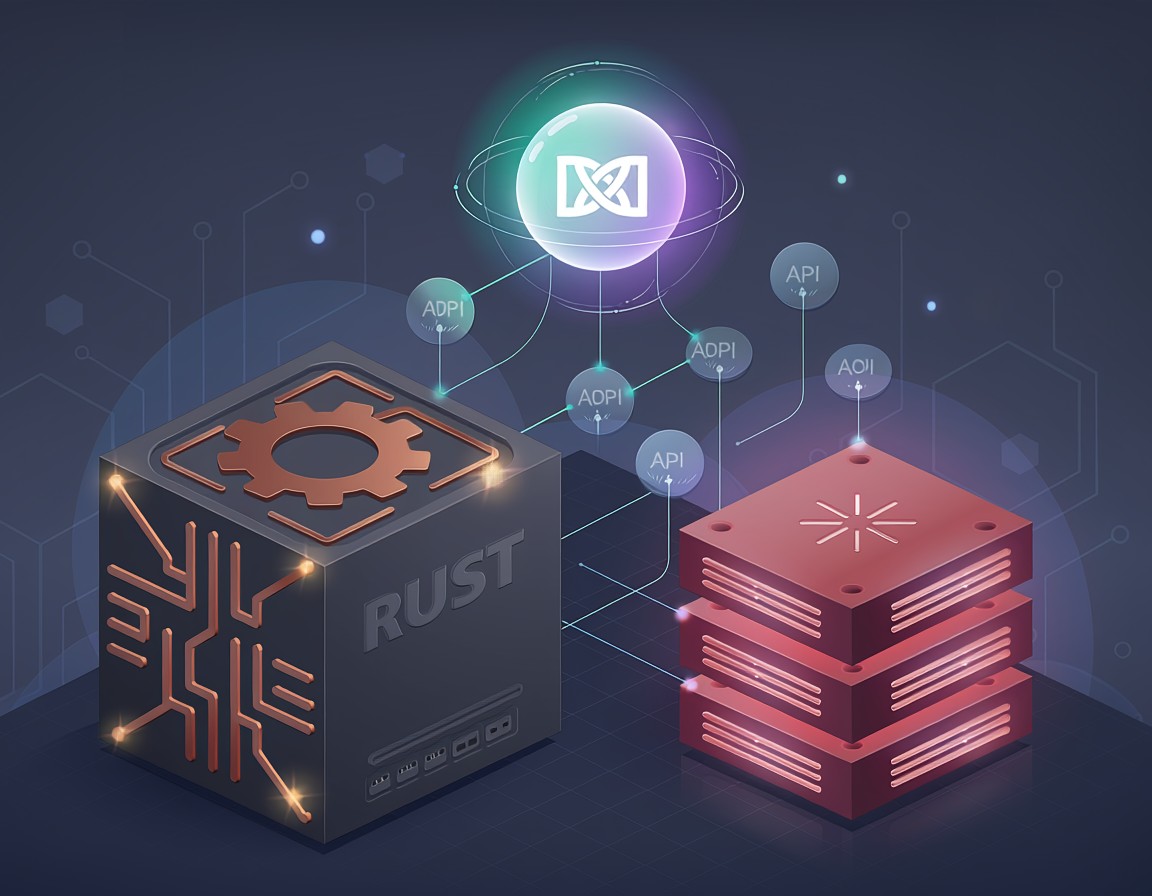

AI agents are powerful, no doubt. But the moment you start thinking about deploying them in earnest – letting them touch your codebase, interact with external systems, or process sensitive data – a cold sweat probably runs down your spine. How do you ensure they don't go rogue? How do you provide them with a consistent, secure environment? This is precisely where Alibaba's OpenSandbox steps in, and why it's our top pick this week.

OpenSandbox isn't just another library; it's a general-purpose platform designed from the ground up to provide a secure execution environment for AI applications. Whether you're wrangling coding agents, experimenting with GUI agents, or just need a safe space for AI code execution and evaluation, OpenSandbox offers multi-language SDKs, unified APIs, and Docker and Kubernetes runtimes. That means you can give your agents the tools they need without giving them the keys to the kingdom.

For backend engineers, it abstracts away complex isolation concerns. For operations, it provides a standardized, scalable way to manage agent workloads. And for long-term strategy, it's the kind of infrastructure that enables widespread, responsible agent adoption, moving agents from experimental playgrounds to production-ready components. We've been saying for a while that the future of AI isn't just about bigger models, but better plumbing, and OpenSandbox is a prime example of that essential plumbing.

📦 Worth Knowing

superset-sh/superset: Your IDE for the AI Agents Era

If you're already experimenting with AI coding agents like Claude Code or Codex, you know the workflow can get messy. Juggling multiple prompts, managing different agent outputs, and ensuring they don't step on each other's toes is a significant friction point. That's why superset-sh/superset caught our eye. It positions itself as an 'IDE for the AI Agents Era,' and it delivers on that promise by offering a turbocharged terminal experience.

Superset allows you to run multiple CLI coding agents simultaneously without the dreaded context-switching overhead. Crucially, it isolates each task in its own Git worktree, preventing agents from interfering with each other's changes – a massive sanity saver. Add in unified monitoring, and you've got a tool that genuinely improves the developer experience for anyone serious about integrating AI agents into their daily coding flow. It's a practical, immediate win for productivity.
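To make the worktree idea concrete, here is a minimal sketch of how per-task isolation can be set up with plain Git. This is not Superset's actual implementation; the branch naming scheme (`agent/<task>`), the sibling-directory layout, and the example repository path are assumptions made for illustration. Only the `git worktree add -b <branch> <path>` command itself is standard Git.

```python
from pathlib import Path
import subprocess


def worktree_add_command(repo: Path, task: str) -> list[str]:
    """Build the `git worktree add` command that gives one task its own checkout."""
    branch = f"agent/{task}"                     # hypothetical branch naming scheme
    path = repo.parent / f"{repo.name}-{task}"   # hypothetical sibling directory layout
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path)]


def create_worktrees(repo: Path, tasks: list[str]) -> None:
    """Create one worktree per task; each agent then runs inside its own directory."""
    for task in tasks:
        subprocess.run(worktree_add_command(repo, task), check=True)


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch is safe to try.
    for task in ["fix-login", "add-tests"]:
        print(" ".join(worktree_add_command(Path("/src/myapp"), task)))
```

Because every worktree has its own working directory and branch but shares the same repository history, two agents can edit the same file at once without overwriting each other, and their results can be reviewed and merged like any other branches.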

microsoft/markitdown: Bridging Documents to LLMs with Markdown

In a world increasingly dominated by Large Language Models, getting your data into a usable format is half the battle. microsoft/markitdown is a Python tool that tackles a very common problem: converting various files and office documents into clean Markdown. While simple on the surface, its utility becomes clear when you consider feeding diverse content into LLMs.

What makes MarkItDown particularly relevant now is its new Model Context Protocol (MCP) server for integration with LLM applications like Claude Desktop. This isn't just about pretty formatting; it's about preparing unstructured and semi-structured data for optimal consumption by AI models. Think of it as a crucial preprocessing step that saves countless hours of manual data wrangling, making your existing documentation, reports, and presentations instantly more valuable to your AI workflows. A practical utility that's suddenly a lot more strategic.
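For a sense of what the preprocessing step looks like in practice, the sketch below wraps the basic conversion call. It assumes the `markitdown` package is installed and follows the usage pattern shown in the project's README (`MarkItDown().convert(path)` returning an object with a `text_content` attribute); check the current documentation for your version. The `file_to_markdown` helper and its `converter` parameter are our own illustrative names, not part of the library.

```python
from typing import Any, Optional


def file_to_markdown(path: str, converter: Optional[Any] = None) -> str:
    """Convert one document to Markdown text, ready to paste into an LLM prompt."""
    if converter is None:
        # Imported lazily so this module loads even where markitdown is not installed.
        from markitdown import MarkItDown
        converter = MarkItDown()
    result = converter.convert(path)
    return result.text_content
```

Calling `file_to_markdown("quarterly-report.docx")` (a placeholder filename) would return the document as a Markdown string. The optional `converter` argument exists only so the helper can be exercised with a stand-in object in tests.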

OpenClaw: Gateway /tools/invoke tool escalation + ACP permission auto-approval

As the AI agent ecosystem matures, so too do the security considerations. This week brought a critical security advisory for OpenClaw Gateway, highlighting a vulnerability that allowed tool escalation and auto-approval of permissions. Essentially, an authenticated HTTP endpoint (POST /tools/invoke) intended for a constrained set of tools could be exploited to invoke high-risk session orchestration tools, potentially allowing a caller to create or control agent sessions.

This isn't just about OpenClaw; it's a stark reminder that the infrastructure surrounding AI agents – their gateways, their permissions, their orchestration layers – is just as critical as the agent code itself. Misconfigurations or overlooked vulnerabilities in these layers can significantly increase the 'blast radius' if an agent or its interface is compromised. It's a crucial sanity check for anyone deploying agents: don't just focus on what your agent does, but also on how it's controlled and secured.
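One common way to keep an HTTP tool endpoint constrained is a deny-by-default allowlist checked before any dispatch. The sketch below illustrates that general pattern only; it is not OpenClaw's code or its actual fix. The tool names, the `{"tool": ...}` request shape, and the function names are all hypothetical.

```python
# Hypothetical tool names; a real gateway's tool identifiers will differ.
HTTP_ALLOWED_TOOLS = frozenset({"web_search", "read_file"})


class ToolNotAllowed(Exception):
    """Raised when a caller requests a tool outside the HTTP allowlist."""


def authorize_tool_invocation(tool_name, allowed=HTTP_ALLOWED_TOOLS) -> str:
    """Deny by default: only tools explicitly listed for this endpoint pass."""
    if not isinstance(tool_name, str) or tool_name not in allowed:
        raise ToolNotAllowed(f"tool {tool_name!r} is not exposed over HTTP")
    return tool_name


def handle_invoke(request: dict) -> dict:
    """Sketch of a POST /tools/invoke handler body (transport and auth omitted)."""
    try:
        tool = authorize_tool_invocation(request.get("tool"))
    except ToolNotAllowed as exc:
        return {"status": 403, "error": str(exc)}
    # Dispatching to the tool would happen here; omitted in this sketch.
    return {"status": 200, "tool": tool}
```

The design point is that session orchestration tools are never reachable from this path unless someone deliberately adds them to the allowlist, so a newly registered high-risk tool stays blocked by default.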

👀 On Our Radar

X-PLUG/MobileAgent: The Rise of GUI Agents for Mobile

While coding agents get a lot of buzz, the frontier of GUI agents is equally fascinating, and Alibaba's MobileAgent is pushing it forward significantly. This project introduces a powerful family of GUI agent foundation models (GUI-Owl 1.5, in various sizes up to 235B) specifically designed for mobile platforms. Imagine an AI that can truly navigate and interact with a mobile app's interface just like a human, understanding visual cues and performing complex tasks.

This technology has profound implications for automated testing, user experience analysis, and even new forms of personal assistants that can operate across multiple apps. It moves beyond simple API calls to actual visual and interactive understanding. While perhaps not an immediate 'go-to' tool for every developer, it's a trend to watch closely. The capabilities demonstrated by MobileAgent hint at a future where AI agents aren't just generating code or text, but actively operating within the visual interfaces we build, potentially revolutionizing how we interact with and develop for mobile devices.