Step-by-Step Guides · Source: DigitalOcean

Sliding Window Attention: Efficient Long-Context Modeling

By Shaoni Mukherjee, February 21, 2026

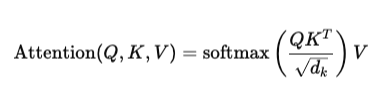

**Introduction**

Modern language models struggle with very long input sequences because the compute and memory cost of standard self-attention grows quadratically with sequence length. Sliding window attention is a practical way around this. Instead of letting every token attend to every other token, it restricts each token to a fixed-size local window of nearby neighbors. For a fixed window size, the cost then grows roughly linearly with sequence length, while the model still captures meaningful local dependencies...
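To make the windowing idea concrete, here is a minimal NumPy sketch. It is not taken from the original article: the function names, the symmetric (non-causal) window, and the `window` parameter are assumptions chosen for illustration. For readability it builds a dense mask over the full score matrix, so it shows which positions each token may attend to rather than the memory savings an optimized banded implementation would deliver.

```python
import numpy as np

def sliding_window_mask(seq_len: int, window: int) -> np.ndarray:
    """Boolean mask: entry [i, j] is True when token i may attend to token j."""
    idx = np.arange(seq_len)
    # Assumed symmetric window: token i sees positions i - window .. i + window.
    return np.abs(idx[:, None] - idx[None, :]) <= window

def sliding_window_attention(q, k, v, window):
    """Scaled dot-product attention restricted to a local window.

    q, k, v: arrays of shape (seq_len, d). Illustrative only: the full
    (seq_len, seq_len) score matrix is still materialized here.
    """
    seq_len, d = q.shape
    scores = q @ k.T / np.sqrt(d)
    mask = sliding_window_mask(seq_len, window)
    # Positions outside the window get -inf, so softmax gives them zero weight.
    scores = np.where(mask, scores, -np.inf)
    # Subtract the row maximum for numerical stability before exponentiating.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v

# Usage: 8 tokens with 4-dimensional embeddings, each attending to 2 neighbors per side.
rng = np.random.default_rng(0)
x = rng.normal(size=(8, 4))
out = sliding_window_attention(x, x, x, window=2)
```

As a rough sense of scale under these assumptions, with 4,096 tokens and `window=256` each token attends to at most 513 positions, so a banded implementation would need about 2.1 million attention scores instead of the roughly 16.8 million that full attention computes.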

---

**[devsupporter commentary]**

This article covers a recent development trend published by DigitalOcean. To learn more about the related tools and technologies, see the original link.
